Executive Summary

The Core Reasoning Assessment Suite is an innovative set of cognitive ability assessments developed by Deeper Signals to help organizations identify, select, and develop top talent. Comprising three adaptive assessments - Logical, Numerical, and Verbal Reasoning - the suite provides a comprehensive measure of the fundamental reasoning skills that underpin career success, learning agility, and leadership potential.

Reasoning ability is one of the strongest predictors of workplace performance, adaptability, and long-term growth. Decades of research demonstrate that employees with higher levels of cognitive ability not only perform better across roles but also learn more quickly, innovate effectively, and adapt to the evolving demands of modern organizations. The Core Reasoning Suite is designed to bring this science into practice, giving organizations evidence-based insights for hiring, development, succession planning, and workforce design.

Each assessment in the suite is built with the same core principles:

- Adaptive and Efficient: Delivered through Computerized Adaptive Testing (CAT), reducing test length while maintaining measurement precision;

- Fair and Accessible: Designed to minimize cultural, linguistic, and educational biases, ensuring fairness across diverse populations;

- Psychometrically Robust: Calibrated with modern Item Response Theory (IRT) methods to ensure reliability, validity, and accuracy across the full spectrum of ability;

- Actionable and Ethical: Provides interpretable insights for organizations and individuals, with a focus on responsible use and compliance with industry standards.

Logical Reasoning

The Core Logical Reasoning Assessment (CLRA) measures abstract problem-solving and pattern recognition through non-verbal puzzles. It has been validated with a global sample of over 4,000 participants, showing strong reliability, convergent validity with established measures of cognitive ability, and no evidence of adverse impact across age, gender, and ethnicity. The CLRA leverages automatic item generation to algorithmically create new matrix reasoning items at varying levels of difficulty. This technique allows the assessment to evolve and improve over time, expanding the item bank and strengthening measurement accuracy with each recalibration.

Numerical Reasoning

The Core Numerical Reasoning Assessment (CNRA) measures the ability to interpret, analyze, and draw logical conclusions from quantitative data, including arithmetic reasoning, number series, and number matrices. A robust item bank of 290 items has been developed under strict fairness and neutrality guidelines.

Verbal Reasoning

The Core Verbal Reasoning Assessment (CVRA) measures the ability to understand, analyze, and evaluate information presented in written form, with item types including analogies, syllogisms, critical reasoning, assumption recognition, and argument evaluation. A robust item bank of 262 items has been developed under strict fairness and neutrality guidelines.

Roadmap and Continuous Improvement

While the suite is already positioned as a complete offering, Deeper Signals is committed to ongoing validation and enhancement. Planned initiatives we are exploring include:

- Expanding and recalibrating item pools to strengthen measurement precision.

- Growing normative samples to ensure global and role-specific relevance.

- Conducting criterion-related validity studies to further demonstrate predictive power for job performance and other critical organizational constructs.

- Running regular fairness audits to ensure assessments remain free of bias and adverse impact.

Introduction to Core Reasoning

Core Reasoning is a suite of adaptive assessments designed to measure cognitive ability — the ability to analyze novel problems, recognize patterns, think critically, and solve complex tasks. Cognitive ability is among the strongest predictors of job performance, learning agility, and adaptability in modern, dynamic workplaces (Chamorro-Premuzic & Furnham, 2010; Sackett et al., 2022). Unlike knowledge or skills that are specific to a role, reasoning ability is broadly applicable across jobs, industries, and career levels.

The Science of Reasoning

Meta-analytic research consistently demonstrates that cognitive ability is among the strongest predictors of career success, including job performance, occupational attainment, training success, and leadership effectiveness (Schmidt & Hunter, 1998; Judge, Colbert, & Ilies, 2004; Sackett, Zhang, Berry, & Lievens, 2022). Specifically, cognitive ability correlates positively with job performance across virtually all job types, with validity coefficients typically ranging from .50 to .65. This relationship remains strong even after controlling for other factors such as experience and education (Schmidt & Hunter, 1998; Sackett et al., 2022).

The Core Reasoning suite is designed to measure this capacity directly, through three complementary domains: Logical, Numerical, and Verbal Reasoning.

Logical Reasoning consists of non-verbal, abstract items designed to minimize cultural and linguistic bias while accurately measuring problem-solving ability. Inspired by Raven’s Progressive Matrices, the assessment focuses on pattern recognition and rule-based reasoning in a purely visual format. Items fall into two primary domains:

- Inductive and Deductive Reasoning: Assessing the ability to detect logical patterns, apply rules, and solve novel problems without relying on prior knowledge.

- Visual–Spatial Reasoning: Evaluating how effectively individuals interpret and manipulate visual information, identify spatial relationships, and generalize across abstract designs.

Numerical Reasoning consists of quantitative items designed to measure how individuals process, interpret, and reason with numerical information. The assessment emphasizes logical operations over rote calculation, ensuring that performance reflects reasoning ability rather than prior mathematical training. Items fall into two primary domains:

- Quantitative Problem-Solving: Assessing the ability to draw logical conclusions from numerical patterns, number series, and arithmetic-based reasoning tasks.

- Data Interpretation: Evaluating the capacity to analyze charts, tables, and structured quantitative information to identify trends and make sound inferences.

Verbal Reasoning consists of language-based items designed to evaluate reasoning with written material while minimizing the influence of vocabulary breadth or cultural knowledge. The assessment focuses on critical thinking with verbal information, ensuring fairness through plain language and context-neutral content. Items fall into two primary domains:

- Analytical Reasoning with Language: Assessing the ability to recognize relationships, draw logical inferences, and evaluate arguments based on written text.

- Deductive and Critical Reasoning: Evaluating the ability to identify assumptions, test validity of conclusions, and interpret or challenge arguments.

Together, these assessments provide a holistic view of an individual’s ability to process information, make sound decisions, and learn quickly in new situations.

Why Reasoning Matters for Organizations

In modern organizations, employees are required to adapt to new technologies, complex data, and evolving business models. Leaders, in particular, must make rapid decisions based on incomplete information, often under pressure. Reasoning ability underpins:

- Talent Acquisition and Recruitment: Enables evidence-based hiring by identifying candidates with the cognitive capabilities needed for success in complex roles.

- Employee Development: Identifies strengths and development areas in reasoning and critical thinking, guiding personalized training and coaching initiatives.

- Succession Planning: Supports organizations in identifying high-potential employees capable of handling increased complexity and leadership roles.

- Team Effectiveness: Enhances understanding of cognitive diversity within teams, promoting better problem-solving and innovation through strategic team composition.

- Strategic Workforce Planning: Offers predictive insights to forecast and manage talent pipelines, facilitating strategic decision-making about skills, training needs, and talent investments.

Why Core Reasoning is Different

The Core Reasoning suite was designed not just to measure cognitive ability, but to do so in a way that is modern, ethical, and sustainable. Several features set it apart from traditional reasoning assessments:

Adaptive and Engaging

Traditional reasoning tests are often lengthy, static, and frustrating for candidates, with items that may feel too easy or impossibly difficult. Core Reasoning assessments instead use Computerized Adaptive Testing (CAT), dynamically adjusting item difficulty throughout the assessment to match each test-taker’s estimated ability. This approach, grounded in Item Response Theory (IRT), maximizes measurement precision at all ability levels while reducing test length to no more than 15 minutes for each assessment. Candidates are challenged appropriately, fatigue is minimized, and the resulting scores are both accurate and fair.

Fair and Accessible

Legacy reasoning tests have often been criticized for embedding cultural or educational biases. The Core Reasoning suite was designed with fairness at the forefront: items are written in plain, neutral language, screened for Differential Item Functioning (DIF), and tested against the four-fifths rule to monitor adverse impact. Accessibility standards such as screen-reader compatibility, contrast, and readability are embedded in the design. These safeguards ensure that observed score differences reflect genuine ability rather than irrelevant cultural or linguistic factors.

Scientifically Rigorous

The Core Reasoning suite is calibrated using modern psychometric models, specifically the 2-Parameter Logistic (2PL) IRT model. This model estimates both item difficulty and discrimination, ensuring accurate ability estimates (theta) and meaningful reliability metrics. Standard errors are tracked at the individual level, providing confidence intervals around scores. Combined with CAT, this ensures that every score reported to clients is both precise and defensible, even when based on fewer items than traditional tests.

Innovative and Evolving

Unlike static assessments with fixed item pools, Core Reasoning incorporates automatic item generation (in Logical Reasoning) and AI-assisted calibration (in Verbal and Numerical). For example, algorithmic rules generate Raven’s-style matrix problems across the full difficulty range, allowing the item bank to expand continuously. This innovation reduces item exposure risks and ensures test content remains secure, while simulations and recalibrations maintain consistent measurement precision over time.

Actionable and Ethical

Core Reasoning is designed with end-users in mind. Results are translated into normed percentile scores and clear interpretive ranges, giving organizations evidence-based insights for hiring, employee development, and workforce planning. All assessments are developed and validated in line with EEOC guidelines and international best practices, ensuring that organizations can make decisions that are both effective and ethically defensible.

Assessment Development & Methodology

The Core Reasoning suite was developed to combine scientific rigor with a candidate-friendly experience. Each assessment follows a consistent design philosophy, grounded in psychometric best practices and organizational fairness standards.

Item Development

The Core Reasoning suite was designed to minimize the cultural and demographic biases that have historically limited the fairness of cognitive assessments. Research shows that traditional ability tests can inadvertently disadvantage certain groups, reducing fairness and limiting the validity of interpretations (Sackett et al., 2022). To address this, items were developed with strict fairness and neutrality standards:

- Construct fidelity: Items are designed to measure reasoning ability directly, avoiding contamination from vocabulary breadth, cultural knowledge, or educational background.

- Range of difficulty: Item banks include content spanning very easy to very difficult levels, enabling accurate measurement across the full ability spectrum.

- Fairness and neutrality: Items undergo expert review and pilot testing for Differential Item Functioning (DIF) across gender, age, and ethnicity, ensuring that observed score differences reflect genuine ability.

- Continuous renewal: Automatic item generation (Logical Reasoning) and AI-assisted development (Numerical and Verbal) allow item banks to expand and refresh regularly, reducing exposure risks and enhancing security.

Assessment Calibration & Adaptive Test Design

All Core Reasoning assessments are built on a foundation of modern Item Response Theory (IRT) and Computerized Adaptive Testing (CAT), ensuring precise, efficient, and fair measurement of reasoning ability.

Item Response Theory Calibration

The CLRA is calibrated using a 2-Parameter Logistic (2PL) IRT model, which estimates both item difficulty (β) and discrimination (α):

- Discrimination (α): Reflects how effectively an item distinguishes between individuals with different levels of ability.

- Difficulty (β): Indicates the ability level required to have a 50% chance of correctly solving the item.
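
The two parameters above can be illustrated with a minimal sketch of the 2PL response function. It assumes the standard logistic form without the 1.7 scaling constant (the manual does not state which metric is used), and the function name and item values are hypothetical, chosen only for illustration.

```python
import math


def prob_correct_2pl(theta: float, alpha: float, beta: float) -> float:
    """Probability of a correct response under a 2PL model.

    theta: test-taker ability
    alpha: item discrimination (slope of the curve at theta == beta)
    beta:  item difficulty (ability at which the probability is 50%)
    """
    return 1.0 / (1.0 + math.exp(-alpha * (theta - beta)))


# Hypothetical item, not taken from any Core Reasoning item bank.
# When ability equals difficulty, the probability is exactly 0.5.
print(prob_correct_2pl(theta=0.0, alpha=1.2, beta=0.0))

# A more able test-taker has a higher probability on the same item.
print(prob_correct_2pl(theta=1.0, alpha=1.2, beta=0.0))
```

Setting `alpha` to 1 for every item reduces this function to the 1PL (Rasch) form.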

The Numerical and Verbal Reasoning assessments have been calibrated using a 1-Parameter Logistic (1PL; Rasch) IRT model, which estimates item difficulty (β) while fixing discrimination (α) to 1 for all items. This approach was selected because, as newer assessments with smaller calibration samples, our primary priority was establishing robust and interpretable difficulty estimates across the item banks. The 1PL model provides stable, conservative calibration under these conditions, ensuring fair and consistent scoring while validation samples are still growing. As larger and more diverse data become available from client use, these assessments will be recalibrated using 2PL or higher-parameter models to capture both difficulty and discrimination with greater precision.

Calibration is carried out using Expectation-Maximization algorithms, providing accurate parameter estimates that reflect the underlying constructs being measured. This allows every item to contribute meaningful information about a candidate’s reasoning ability, and ensures that scores are both reliable and interpretable. The choice of the 2-Parameter Logistic (2PL) model is supported by extensive research demonstrating its effectiveness in cognitive ability testing and adaptive delivery (Embretson & Reise, 2000; Rust, Kosinski, & Stillwell, 2021; van der Linden, 2016).

Adaptive Test Design

The Core Reasoning suite uses CAT technology to tailor each assessment to the individual test-taker. Adaptive delivery dynamically selects items based on previous responses, adjusting item difficulty in real time to match estimated ability. This design:

- Maximizes precision by administering items that are most informative at a candidate’s ability level.

- Reduces test length and fatigue, typically requiring less than 15 minutes to complete.

- Creates a fairer experience, by avoiding sequences of items that are too easy or too difficult for a given individual.

Key features of the adaptive methodology include:

- Item Selection Criterion: Items are chosen using the Maximum Fisher Information method, optimizing the accuracy of ability estimates.

- Exposure Control: Approaches such as the Sympson-Hetter method are used to balance measurement precision with item bank security.

- Stopping Rules: Assessments terminate when measurement precision reaches a defined threshold (e.g., standard error < 0.35) or when a maximum of 30 items have been administered, balancing reliability with efficiency.
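
As a rough illustration of the item selection and stopping rules listed above, the sketch below picks the unadministered item with the highest Fisher information at the current ability estimate and checks the two stopping conditions. It is a simplified sketch under stated assumptions, not the production algorithm: it assumes 2PL items, omits exposure control and ability re-estimation, and uses hypothetical function names and a hypothetical three-item bank.

```python
import math


def prob_correct(theta, alpha, beta):
    """2PL probability of a correct response."""
    return 1.0 / (1.0 + math.exp(-alpha * (theta - beta)))


def item_information(theta, alpha, beta):
    """Fisher information of a 2PL item at ability theta: alpha^2 * P * (1 - P)."""
    p = prob_correct(theta, alpha, beta)
    return alpha ** 2 * p * (1.0 - p)


def select_next_item(theta, item_bank, administered):
    """Return the id of the unadministered item that is most informative at theta."""
    candidates = [item_id for item_id in item_bank if item_id not in administered]
    return max(candidates, key=lambda i: item_information(theta, *item_bank[i]))


def standard_error(theta, item_bank, administered):
    """Standard error of theta: 1 / sqrt(sum of information of administered items)."""
    total = sum(item_information(theta, *item_bank[i]) for i in administered)
    return math.inf if total == 0 else 1.0 / math.sqrt(total)


def should_stop(theta, item_bank, administered, se_threshold=0.35, max_items=30):
    """Stop when the item cap is reached or the standard error falls below the threshold."""
    if len(administered) >= max_items:
        return True
    return standard_error(theta, item_bank, administered) < se_threshold


# Hypothetical bank: item id -> (alpha, beta). Values are illustrative only.
bank = {"A": (1.0, -1.0), "B": (1.5, 0.0), "C": (0.8, 1.0)}

first = select_next_item(theta=0.0, item_bank=bank, administered=set())
print(first)  # "B": highest discrimination and difficulty closest to theta
print(should_stop(theta=0.0, item_bank=bank, administered={first}))  # False
```

In a full adaptive loop, the ability estimate would be updated after each response before the next item is selected.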

Fairness and Adverse Impact Assessment

Ensuring fairness is central to the design and use of the Core Reasoning suite. Research shows that cognitive ability assessments, when not carefully designed, can inadvertently disadvantage certain demographic groups, limiting both fairness and validity of interpretations (Sackett et al., 2022). To address this, fairness considerations were built into every stage of development, from item writing to large-scale calibration. All assessments in the Core Reasoning suite undergo:

- Differential Item Functioning (DIF) analysis across age, gender, and ethnicity.

- Adverse Impact Monitoring, including four-fifths rule simulations to ensure compliance with employment guidelines (e.g., EEOC) and group comparisons at item and scale levels.

- Accessibility reviews, including compatibility with screen readers, plain-language readability, and bias-free content.

Interpreting CRA Scores and Ethical Application

Each of the Core Reasoning Assessments (CRAs) produces a percentile score, allowing an individual's performance to be compared against a normative group.

- Low Scores (below 33rd percentile): Individuals in this range may find roles requiring rapid learning, complex decision-making, or abstract problem-solving more challenging. However, they may thrive in roles that emphasize structured processes, consistent routines, and attention to detail, where reliability and steadiness are key strengths.

- Moderate Scores (33rd–66th percentile): Individuals scoring in the middle percentile range demonstrate well-developed, dependable reasoning ability across verbal, numerical, and abstract problem-solving tasks. They are generally able to understand new information, apply logical rules, and make sound judgments in situations that are structured or moderately complex. While they may take longer to adapt to highly ambiguous or rapidly changing situations than higher scorers, they typically show steady performance, good judgment, and reliable learning as experience and context increase.

- High Scores (above 66th percentile): Individuals scoring in this range typically demonstrate strong reasoning skills, adaptability, and the ability to learn quickly. They are often effective in dynamic or complex environments that demand flexibility, innovation, and problem-solving. To remain engaged, they may benefit from challenging tasks, opportunities for growth, and environments that encourage analytical thinking.
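
The three interpretive ranges above can be expressed as a simple lookup. The sketch below is illustrative only: the function name is hypothetical, and treating exactly the 33rd and 66th percentiles as Moderate is an assumption drawn from the "33rd–66th" wording.

```python
def interpretive_band(percentile: float) -> str:
    """Map a percentile score (0-100) to an interpretive range.

    Assumption: the boundary values 33 and 66 are treated as Moderate.
    """
    if not 0 <= percentile <= 100:
        raise ValueError("percentile must be between 0 and 100")
    if percentile < 33:
        return "Low"
    if percentile <= 66:
        return "Moderate"
    return "High"


print(interpretive_band(20))  # Low
print(interpretive_band(50))  # Moderate
print(interpretive_band(90))  # High
```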

It is important to interpret CRA scores responsibly and contextually. Scores should not be used in isolation to make employment decisions unless rigorous local validation and adverse impact studies have been conducted. Deeper Signals can partner with organizations to carry out such studies.

Organizations using these assessments must ensure compliance with EEOC guidelines and avoid discriminatory practices, including regularly reviewing outcomes for adverse impact across demographic groups. Although validity studies are not required for tools that show no adverse impact on protected groups, best practice is to continue building evidence of construct and criterion validity over time. Deeper Signals recommends piloting the assessments and collecting local evidence before applying them to employee selection and development decisions.

Deeper Signals is also committed to algorithmic responsibility, conducting regular audits of its scoring models to ensure they meet industry standards for reliability, validity, and fairness.

When applying CRA scores in talent decision-making, it is recommended to use normed scores to ease interpretation and comparison. As a general guideline, a cutoff at the 25th percentile may be used to balance the need to identify individuals with the lowest scores while reducing the risk of adverse impact. However, cutoff scores should always be informed by job analysis and local validation studies to ensure the most suitable profile is applied in practice. Importantly, low scores do not imply negative, unproductive, or harmful behavior — they simply reflect different strengths and capabilities.

Psychometric Properties of Core Reasoning Assessments

Logical Reasoning

The Core Logical Reasoning Assessment (CLRA) uses abstract reasoning tasks inspired by Raven’s Progressive Matrices, which assess the ability to identify patterns and solve novel problems through nonverbal, visual stimuli (Raven, Raven, & Court, 1998). These matrices are widely recognized as the gold standard due to their high validity and low susceptibility to cultural biases. Items are deliberately non-verbal to minimize cultural and linguistic bias. The assessment covers two primary domains:

- Inductive and Deductive Reasoning: The ability to detect and apply logical rules in novel contexts.

- Visual–Spatial Reasoning: The ability to interpret abstract visual information and recognize spatial relationships.

A distinctive feature of the CLRA is the use of automatic item generation when designing the initial item bank. Algorithmic rules define how matrix-style reasoning problems are built, enabling the creation of new items across a broad difficulty range. These rules are based on findings of Carpenter et al. (1990) who defined a taxonomy for how progressive matrices can be constructed. This innovation ensures that the test bank can be continuously refreshed, reducing item exposure risks and maintaining fairness and precision over time.

To ensure robust calibration and validation, the CLRA underwent extensive empirical testing. Initial data collection efforts resulted in 11,284 candidate responses, averaging approximately 12.5 items per participant. After filtering responses based on strict criteria (such as minimum number of items answered and acceptable assessment durations), a final calibration sample of 4,410 participants remained. This sample was collected from a global population of 18- to 60-year-olds and is used to convert ability (theta) scores into percentile scores.
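
A minimal sketch of how a theta score could be converted into a percentile against a normative sample is shown below. It assumes the percentile is defined as the share of the normative group scoring at or below the candidate (other conventions exist, and the manual does not say which is used); the function name and the tiny normative sample are hypothetical.

```python
from bisect import bisect_right


def percentile_rank(theta: float, norm_thetas: list[float]) -> float:
    """Percentage of the normative sample with a theta at or below the given theta."""
    if not norm_thetas:
        raise ValueError("normative sample must not be empty")
    ordered = sorted(norm_thetas)
    return 100.0 * bisect_right(ordered, theta) / len(ordered)


# Hypothetical five-person normative sample, for illustration only.
norm_sample = [-1.0, -0.5, 0.0, 0.5, 1.0]
print(percentile_rank(0.0, norm_sample))  # 60.0
```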

Interpreting CLRA Scores

The CLRA produces percentile scores, allowing an individual's performance to be compared against a normative group.

- Low Scores (below the 33rd percentile): Individuals scoring in this range may find inductive and deductive reasoning tasks more challenging, such as recognizing abstract patterns, drawing logical conclusions, or generalizing rules to new problems. They may also have difficulty processing complex visual–spatial information under time pressure. However, they may perform well in structured roles where tasks are consistent, rules are explicit, and attention to detail is highly valued.

- Moderate Scores (33rd–66th percentile): Individuals scoring in the middle percentile range demonstrate solid, dependable reasoning ability. While they may take longer to adapt to highly complex or ambiguous situations than higher scorers, they typically show steady performance, good judgment, and reliable learning when given appropriate context, guidance, and experience.

- High Scores (above the 66th percentile): Individuals in this range typically excel at identifying complex patterns, applying logical reasoning to novel situations, and interpreting abstract visual information quickly and accurately. They are likely to thrive in roles that require flexible problem-solving, rapid learning, and adaptation to dynamic or ambiguous contexts. These individuals may benefit from being given challenging, varied tasks that allow them to leverage their reasoning strengths.

Psychometric Properties

CLRA items exhibited a broad spectrum of difficulty and discrimination values, reflecting the intentional design to cover a wide range of cognitive ability. The difficulty parameter (β) ranged from -1.88 to +4.17, which means the test includes both relatively easy and highly challenging items. Discrimination values (α) ranged from .23 to 2.31, indicating the varying ability of items to differentiate between individuals of different reasoning abilities.

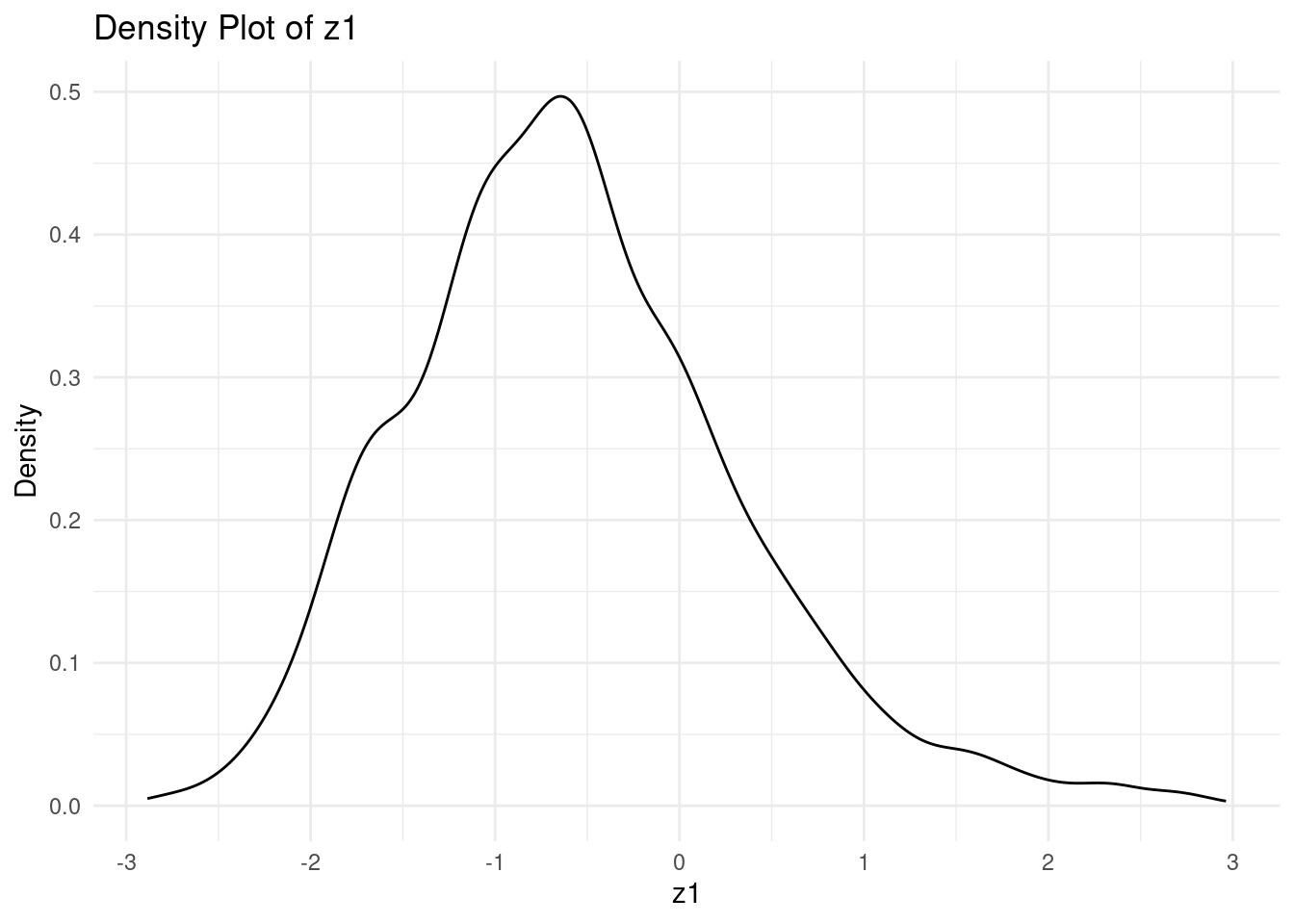

CLRA theta scores ranged from -2.886 to 2.960, with a mean of -.575 and standard deviation of .904. The distribution was slightly negatively skewed, suggesting that most participants found the assessment moderately challenging yet accessible, and the scores spanned a wide range of ability (see Fig. 1 for the distribution of theta scores).

Fig 1. A density plot of CLRA theta scores

To assess the underlying structure of the CLRA, Exploratory Factor Analysis was conducted. The analysis revealed that the test is best described by a unidimensional factor structure. This finding is consistent with theoretical expectations, as the CLRA is designed to measure a single latent construct — logical reasoning ability. The unidimensionality assumption is further supported by the scree plot, which showed a clear inflection point after the first factor, and by eigenvalue analysis.

Numerical Reasoning

The Core Numerical Reasoning Assessment (CNRA) measures the ability to interpret, analyze, and draw logical conclusions from quantitative information. It is designed to assess fluid reasoning with numbers, focusing on problem-solving and logical inference rather than rote calculation. The assessment covers two primary domains:

- Quantitative Problem-Solving: The ability to apply arithmetic reasoning to structured problems (e.g., percentages, ratios, word problems).

- Logical Quantitative Reasoning: The ability to identify patterns and rules in number series or number matrices, requiring inductive and deductive reasoning with numerical stimuli.

Interpreting CNRA Scores

- Low Scores (below the 33rd percentile): Individuals in this range may find numerical problem-solving and abstract quantitative reasoning more challenging. They may struggle with identifying hidden rules in number patterns or interpreting data under time constraints, but can excel in tasks that are more structured, routine, and do not demand frequent quantitative inference.

- Moderate Scores (33rd–66th percentile): Individuals scoring in the middle percentile range demonstrate solid numerical reasoning ability. They are generally able to work accurately with familiar quantitative information, recognize straightforward patterns, and draw logical conclusions from structured numerical data. While they may be slower or less confident when faced with highly complex, abstract, or time-pressured quantitative problems than higher scorers, they typically show dependable performance and steady improvement with practice and contextual support.

- High Scores (above the 66th percentile): Individuals scoring in this range typically excel at extracting logical conclusions from numerical information, identifying patterns, and solving quantitative problems efficiently. They are likely to thrive in data-driven roles requiring rapid, accurate interpretation of numbers, financial or operational data, and structured problem-solving.

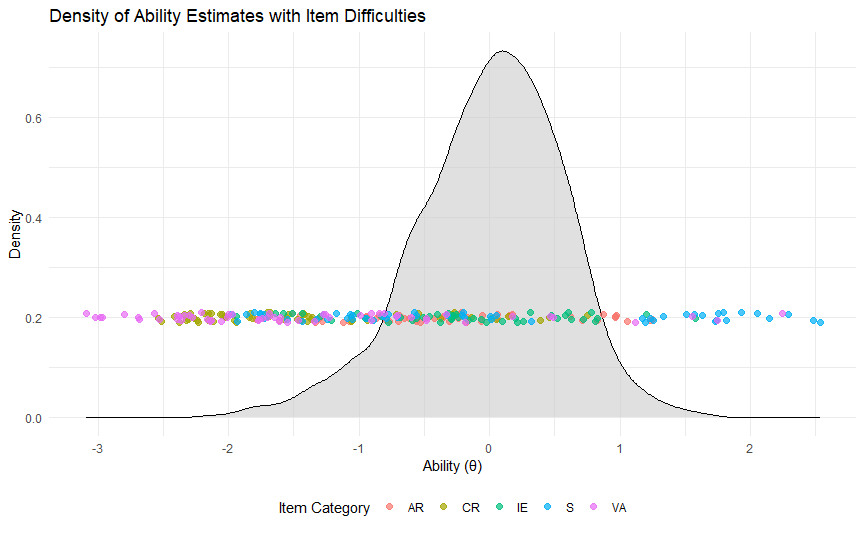

To ensure robust calibration and validation, the CNRA underwent extensive empirical testing. Initial data collection efforts resulted in 2,734 candidate responses. This sample was collected from a global population of 18- to 60-year-olds and is used to convert ability (theta) scores into percentile scores.

Psychometric Properties

The CNRA has an item bank of 256 items, which exhibited a broad spectrum of difficulty values, reflecting the intentional design to cover a wide range of cognitive ability. Initial validation of the CNRA was done with 1-Parameter Logistic (1PL) modelling, where discrimination is fixed to α = 1 across all items; this simpler model was chosen because of the sample size available for item validation. The difficulty parameter (β) ranged from -3.32 to +3.51, which means the test includes both very easy and highly challenging items.

CNRA theta scores ranged from -3.11 to 2.94, with a mean of 0.001 and standard deviation of 1.04. The distribution was slightly negatively skewed, suggesting that most participants found the assessment moderately challenging yet accessible, and the scores spanned a wide range of ability.

Verbal Reasoning

Verbal Reasoning Construct

The Core Verbal Reasoning Assessment (CVRA) measures the ability to understand, analyze, and evaluate arguments and written information. It focuses on fluid reasoning with language, avoiding heavy reliance on vocabulary or cultural knowledge. The assessment covers five primary domains:

- Verbal Analogies: Measuring relational reasoning by mapping logical relationships between word pairs.

- Syllogisms: Assessing deductive logic by evaluating the validity of conclusions based on premises.

- Critical Reasoning & Inference: Drawing logical conclusions from short passages.

- Assumption Recognition: Identifying unstated premises necessary for arguments to hold.

- Argument Evaluation: Evaluating argument strength, identifying flaws, and interpreting evidence.

Interpreting CVRA Scores

- Low Scores (below the 33rd percentile): Individuals in this range may find it harder to evaluate arguments, recognize assumptions, or infer conclusions from written material. They may do better in roles with structured tasks and less emphasis on complex verbal reasoning.

- Moderate Scores (33rd–66th percentile): Individuals scoring in the middle percentile range demonstrate solid verbal reasoning ability. They are generally able to understand written information, follow arguments, and draw reasonable conclusions from text, particularly when ideas are clearly expressed and logically structured. While they may be less comfortable with highly abstract arguments, subtle logical flaws, or ambiguous language than higher scorers, they typically show reliable judgment and steady improvement as familiarity with the context or subject matter increases.

- High Scores (above the 66th percentile): Individuals scoring in this range typically excel at analyzing arguments, identifying logical flaws, and making reasoned judgments from text. They are likely to succeed in roles requiring critical thinking, decision-making, or leadership communication.

To ensure robust calibration and validation, the CVRA underwent extensive empirical testing. Initial data collection efforts resulted in 1,609 candidate responses. This sample was collected from a global population of 18- to 60-year-olds and is used to convert ability (theta) scores into percentile scores.

Psychometric Properties

The CVRA item bank consisted of 240 items, which exhibited a broad spectrum of difficulty values, reflecting the intentional design to cover a wide range of cognitive ability. Initial validation of the CVRA was done with 1-Parameter Logistic (1PL) modelling, where discrimination is fixed to α = 1 across all items; this simpler model was chosen because of the sample size available for item validation. The CVRA difficulty parameter (β) ranged from -3.09 to +2.53, which means the test includes both relatively easy and highly challenging items.

CVRA theta scores ranged from -2.13 to +1.657, with a mean of 0.0 and standard deviation of 0.566. The distribution was slightly negatively skewed, suggesting that most participants found the assessment moderately challenging yet accessible, and the scores spanned a wide range of ability (see Fig. 3 for the distribution of theta scores).

Fig 3. A density plot of CVRA theta scores

Core Reasoning Assessment Studies

Convergent Validity Studies

Evidence for convergent validity was obtained by correlating Core Reasoning assessment scores with scores from the International Cognitive Ability Resource (ICAR-16; Condon & Revelle, 2014), a widely used measure of cognitive ability. In a subsample of 655 participants who completed both the Core Reasoning and ICAR assessments, CLRA theta scores demonstrated a strong positive correlation with ICAR-16 scores (see Table 1). This significant correlation indicates substantial overlap between the constructs measured by the two assessments, supporting the CLRA's validity as a measure of logical and abstract reasoning. Furthermore, this finding aligns with prior research linking performance on reasoning tasks to general cognitive ability.

Table 1: Correlation between the Core Logical Reasoning Assessment and ICAR-16

For the Core Numerical Reasoning Assessment (CNRA) and Core Verbal Reasoning Assessment (CVRA), convergent validity analyses have not yet been completed. As newer assessments, these measures are still in the early stages of large-scale data collection. Establishing convergent validity evidence for both is a high priority on our research roadmap, and studies are already planned to compare CNRA and CVRA scores with established measures of cognitive ability. These results will be added to future versions of this technical manual, ensuring the same level of scientific evidence and transparency as is available for the CLRA.

Group Differences & Adverse Impact Simulations

Group Differences

Independent-samples t-tests were conducted to investigate whether males and females, individuals under and over 40 years old, and White and Non-White individuals differed significantly in CRA theta scores. Cohen’s d was also computed to gauge the extent to which any such differences are practically meaningful. As shown in Tables 2, 3, and 4, there were no statistically significant or practically meaningful differences in theta scores between males and females or between White and Non-White respondents. There was a statistically significant difference between respondents under and over 40 years old; however, Cohen’s d suggests that this difference is small in practical terms.
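The two statistics used here can be sketched as follows. The example assumes a pooled-variance (Student's) t-test and a pooled-standard-deviation Cohen's d; the manual does not state which variant was used, the function names are hypothetical, and the scores are invented for illustration.

```python
import math
from statistics import mean, variance


def pooled_variance(a: list[float], b: list[float]) -> float:
    """Pooled sample variance of two independent groups."""
    n1, n2 = len(a), len(b)
    return ((n1 - 1) * variance(a) + (n2 - 1) * variance(b)) / (n1 + n2 - 2)


def cohens_d(a: list[float], b: list[float]) -> float:
    """Standardized mean difference using the pooled standard deviation."""
    return (mean(a) - mean(b)) / math.sqrt(pooled_variance(a, b))


def students_t(a: list[float], b: list[float]) -> tuple[float, int]:
    """Return the t statistic and degrees of freedom (equal variances assumed)."""
    n1, n2 = len(a), len(b)
    standard_error = math.sqrt(pooled_variance(a, b) * (1 / n1 + 1 / n2))
    return (mean(a) - mean(b)) / standard_error, n1 + n2 - 2


# Hypothetical theta scores for two groups (illustrative only)
group_a = [-0.4, 0.1, 0.3, 0.6, -0.2, 0.0]
group_b = [-0.3, 0.2, 0.1, 0.5, -0.1, 0.2]

t_stat, df = students_t(group_a, group_b)
print(f"t({df}) = {t_stat:.3f}, d = {cohens_d(group_a, group_b):.3f}")
```

Turning the t statistic into a p-value requires the t distribution, for example via `scipy.stats.ttest_ind`. The t statistic grows with sample size while Cohen's d does not, which is why a difference can be statistically significant yet small in practical terms.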

Although these analyses reveal no to small group differences, they do not identify or confirm assessment bias. To empirically test this, adverse impact analyses were conducted.

Table 2: Tests of Group Differences in CLRA Theta Scores

Table 3: Tests of Group Differences in CNRA Theta Scores

Table 4: Tests of Group Differences in CVRA Theta Scores

Adverse Impact Simulations

Adverse Impact (AI) can be defined as “a substantially different rate of selection in hiring, promotion, or other employment decisions which works to the disadvantage of members of a race, sex or ethnic group” (see section 1607.16 of the Uniform Guidelines on Employee Selection Procedures; Equal Employment Opportunity Commission, Civil Service Commission, U.S. Department of Labor, 1978). The “Four-Fifths rule” can be used to determine whether an assessment has AI. Specifically, a “selection rate for any race, sex or ethnic group which is less than four-fifths (4/5) (or eighty percent) of the rate for the group with the highest rate will generally be regarded by the Federal enforcement agencies as evidence of adverse impact” (1978, see section 1607.4 D). Furthermore, because the Age Discrimination in Employment Act protects individuals aged 40 and older, assessments should not adversely impact younger or older individuals (Age Discrimination in Employment Act of 1967).

While the previous analyses demonstrated no to small group differences, AI simulations based on the four-fifths rule were conducted to further demonstrate that CRA scores do not adversely impact protected groups. To test for AI, we compared the selection rate of protected groups (e.g., females, individuals over 40 years old, and Non-White individuals) against the selection rate of non-protected groups (e.g., males, individuals under 40 years old, and White individuals). Ratios greater than or equal to .80 indicate that the assessment has no AI.

Using a cutoff score of 25%, we conducted AI simulations for three demographic dimensions: age, gender, and ethnicity. Table 5 contains the results of our AI analysis. In every simulation, the AI ratio was equal to or greater than .80 for each scale and demographic group.
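The four-fifths check described above can be sketched in a few lines. The example is illustrative only: the function names and scores are hypothetical, and the cutoff is passed in as a raw score threshold because the manual does not detail how the 25% cutoff is applied. Following the description above, the ratio divides the protected group's selection rate by the comparison group's rate.

```python
def selection_rate(scores: list[float], cutoff: float) -> float:
    """Share of a group scoring at or above the cutoff."""
    if not scores:
        raise ValueError("group has no scores")
    return sum(1 for s in scores if s >= cutoff) / len(scores)


def adverse_impact_ratio(
    protected: list[float], reference: list[float], cutoff: float
) -> float:
    """Protected-group selection rate divided by the reference-group rate."""
    reference_rate = selection_rate(reference, cutoff)
    if reference_rate == 0:
        raise ValueError("reference group has no selected candidates")
    return selection_rate(protected, cutoff) / reference_rate


def passes_four_fifths(ratio: float, threshold: float = 0.80) -> bool:
    """True when the ratio meets or exceeds the four-fifths threshold."""
    return ratio >= threshold


# Hypothetical theta scores (illustrative only, not study data)
protected_scores = [-0.8, -0.2, 0.1, 0.4, 0.9]
reference_scores = [-0.7, -0.3, 0.0, 0.3, 0.5, 1.0]
cutoff = -0.25  # hypothetical threshold on the theta scale

ratio = adverse_impact_ratio(protected_scores, reference_scores, cutoff)
print(f"AI ratio = {ratio:.2f}, passes four-fifths rule: {passes_four_fifths(ratio)}")
```

A ratio can exceed 1.0 when the protected group is selected at a higher rate than the comparison group; only values below .80 are treated as evidence of adverse impact under the rule.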

Table 5: Adverse Impact Simulations

Assessment Enhancement & Validation Roadmap

The Core Reasoning suite is built on rigorous foundations, but Deeper Signals is committed to continuous improvement. Future research and development efforts will focus on three key priorities:

Building Better, Fairer, and More Representative Assessments

Deeper Signals will continue to expand and refine the Core Reasoning item banks to strengthen measurement precision and security. Efforts will focus on:

- Expanding item pools using a combination of automatic item generation (Logical) and AI-assisted item writing (Numerical and Verbal), ensuring broader coverage across ability levels.

- Ongoing fairness audits, including Differential Item Functioning (DIF) analyses and adverse impact simulations, to ensure assessments remain equitable across age, gender, ethnicity, and other demographic groups.

- Expanding normative samples across geographies, industries, and career levels, enabling more representative benchmarks and context-specific norms for organizations.

The objective is to create a continuously improving assessment experience that is not only more precise and secure, but also fair and representative of the diverse global workforce.

Validation Studies to Build Credibility and Differentiation

Validation research will continue to strengthen the evidence base for the Core Reasoning suite, with two complementary goals:

- Suite-level validation: Examining the predictive power of general reasoning ability (the combined competency across Logical, Numerical, and Verbal reasoning) for workplace outcomes such as job performance, adaptability, and leadership potential.

- Facet-level validation: Demonstrating the unique predictive contributions of each reasoning domain, showing how Logical, Numerical, and Verbal reasoning differentiate between workplace capabilities (e.g., problem-solving, quantitative analysis, communication, strategic decision-making).

By addressing the bandwidth–fidelity trade-off, these studies will highlight both the breadth of general reasoning and the specificity of individual reasoning abilities, providing organizations with evidence-based insights for different talent decisions.

Continuous Innovation

Deeper Signals is committed to exploring new methodologies to enhance the quality and impact of its assessments. Planned innovations include:

- AI validation studies, testing whether large language models can simulate human response patterns to support item calibration and parameter estimation.

- Dynamic scoring models that incorporate additional data, such as reaction times, to refine precision and detect response patterns.

- Next-generation assessment design, leveraging advances in AI to generate new item types, reduce exposure risks, and expand construct coverage.

These initiatives ensure the Core Reasoning suite remains at the forefront of innovation, combining psychometric rigor with cutting-edge technology.

The CLRA is a new assessment, and as of September 2025, its development and validation remain an ongoing, iterative process. To continually enhance the utility and predictive validity of the CLRA, we plan to implement the following initiatives:

- Expansion of Item Pool: We will regularly generate and validate additional assessment items designed to cover an even broader spectrum of cognitive abilities, improving both the precision and accuracy of cognitive measurements.

- Normative Data Enhancement: We aim to expand our normative data, developing larger, more diverse, and representative norms. This effort will establish robust benchmarks across different regions, job roles, and organizational levels.

- Continuous Calibration & Fairness Testing: Ongoing recalibration of existing items will be conducted to maintain measurement accuracy. Additionally, routine analyses will identify and mitigate adverse impacts, ensuring compliance with EEOC guidelines and equitable assessment outcomes across demographic groups.

- Validation Research Expansion: We plan extensive validation research aimed at demonstrating the criterion-related validity of the CLRA. This includes establishing the predictive capability of the assessment in relation to job performance and key organizational outcomes across various roles and industries.

We actively invite collaboration from researchers and enterprises interested in advancing the CLRA’s psychometric rigor and practical impact. Our commitment is to consistently enhance the CLRA to better serve the diverse needs of modern organizations and their talent management strategies.

References

Age Discrimination in Employment Act of 1967, Pub. L. No. 90-202 (1967).

Carpenter, P. A., Just, M. A., & Shell, P. (1990). What one intelligence test measures: A theoretical account of the processing in the Raven Progressive Matrices Test. Psychological Review, 97(3), 404–431.

Carroll, J. B. (1993). Human cognitive abilities: A survey of factor-analytic studies. Cambridge University Press.

Cattell, R. B. (1963). Theory of fluid and crystallized intelligence: A critical experiment. Journal of Educational Psychology, 54(1), 1–22.

Chamorro-Premuzic, T., & Furnham, A. (2010). The psychology of personnel selection. Cambridge University Press.

Condon, D. M., & Revelle, W. (2014). The international cognitive ability resource: Development and initial validation of a public-domain measure. Intelligence, 43, 52-64.

Embretson, S. E., & Reise, S. P. (2013). Item response theory for psychologists. Psychology Press.

Equal Employment Opportunity Commission, Civil Service Commission, U.S. Department of Labor, & U.S. Department of Justice. (1978). Uniform guidelines on employee selection procedures. Federal Register, 43, 38290–38309.

Judge, T. A., Colbert, A. E., & Ilies, R. (2004). Intelligence and leadership: A quantitative review and test of theoretical propositions. Journal of Applied Psychology, 89(3), 542–552.

Raven, J., Raven, J. C., & Court, J. H. (1998). Manual for Raven's progressive matrices and vocabulary scales. Oxford Psychologists Press.

Rust, J., Kosinski, M., & Stillwell, D. (2021). Modern psychometrics: The science of psychological assessment (4th ed.). Routledge.

Sackett, P. R., Zhang, C., Berry, C. M., & Lievens, F. (2022). Revisiting meta-analytic estimates of validity in personnel selection: Addressing systematic overcorrection for restriction of range. Journal of Applied Psychology, 107(11), 2040–2068.

Schmidt, F. L., & Hunter, J. E. (1998). The validity and utility of selection methods in personnel psychology: Practical and theoretical implications of 85 years of research findings. Psychological Bulletin, 124(2), 262–274.

van der Linden, W. J. (Ed.). (2016). Handbook of item response theory (Vol. 1). CRC Press.

Zeidner, M. (1998). Test anxiety: The state of the art. Plenum Press.